Operational Risk Management (ORM) has grown in emphasis throughout U.S. Coast Guard boat operations since the late 1990s. The 1997 Quillayute River mishap is an indelible milestone on the evolutionary path of Coast Guard risk management.

The Evolution of Civilian ORM

From an evolutionary perspective, operational risk management was bound to emerge in the 1970s, after a series of deadly airplane crashes involving “highly professional crews.” (1) On 29 December 1972, Eastern Air Lines Flight 401 crashed into the Florida Everglades after its aircrew lost situational awareness while focusing on a faulty instrument panel light. The accident killed 99 people, and even over the past 20 years, the National Transportation Safety Board (NTSB) has attributed roughly 85 percent of aviation accidents to “pilot error.” (2)

Understanding Human Factors

As accidents stacked up, experts and government officials began meeting to discuss human factors, performance, and accident statistics. The year 1972 gave birth to the “SHEL” model of human factors, named for Software (aircraft control systems and instruments), Hardware (the aircraft, mechanical systems, and maintenance), Environment (the container for the whole picture), and Liveware (all the people involved). Those concerned with professional aviation realized that flying had become much more complex in that era, as evidenced by the expansion of checklists, the need for more regulations and safety procedures, and the requirement for pilots to multitask more than ever. (1)

Research into human factors identified risky behaviors shared by accident-prone pilots. Among these behaviors and attitudes were disdain for rules, a tendency toward “thrill and adventure seeking,” impulsiveness (rather than methodical analysis and decision making), and disregard for outside sources of information (including other team members). (2)

The discussion continued, and in 1974 Frank Bird updated the “domino theory” of accident causation, which held that “if any one domino is removed from the sequence and is strong enough to withstand impact, the expected result of the other dominoes falling is not likely to happen.” (1) In 1979, NASA built on this concept and held a multidisciplinary summit that included airlines, aviators, scientists, and psychologists.

This synergistic event spawned the field of aviation psychology and created the concept of crew resource management (CRM). For the first time, the industry formally recognized the complexity of what goes on inside the minds of pilots and aircrews. (1)

Many aircrews were suspicious of CRM, wary that scientists and specialists were invading their cockpits and getting into their business. United Airlines was one of the first commercial carriers to implement its own CRM program, and as more major air carriers joined the discussion, aircrews gradually realized that with CRM, they “weren’t losing the cockpit, but learning to manage the systems and workload more efficiently.” Workload management has since become one of several major components of CRM. (1)

By 1990, “domino theory” had evolved into James Reason’s “Swiss cheese model,” which graphically showed how the holes present in even the best-laid plans could align to make mishaps likely. Experts began to examine these “holes” and to classify the human factors behind them. Drs. Scott Shappell and Douglas Wiegmann’s Human Factors Analysis and Classification System (HFACS) helped explain why human errors occur in aviation using four tiers of factors: at the top, organizational influences; then unsafe supervision; then preconditions for unsafe acts; and at the bottom, the unsafe acts that result in a mishap. CRM applies to all levels, but for operators, CRM targets the preconditions for unsafe acts. (1)

Components of Civilian CRM

The NTSB determined that many pilot-error accidents resulted from the culture of flight training programs, which often focused on the physical aspects of flying and the related knowledge students needed to pass certification tests. (2) Once attributed to experience alone, good judgment is now understood as a skill in its own right, one that can be intentionally taught and cultivated. Out of this understanding came aeronautical decision making (ADM), training that has since been shown to reduce judgment errors by 10 to 50 percent among test subjects. (2)

Personal Attitudes

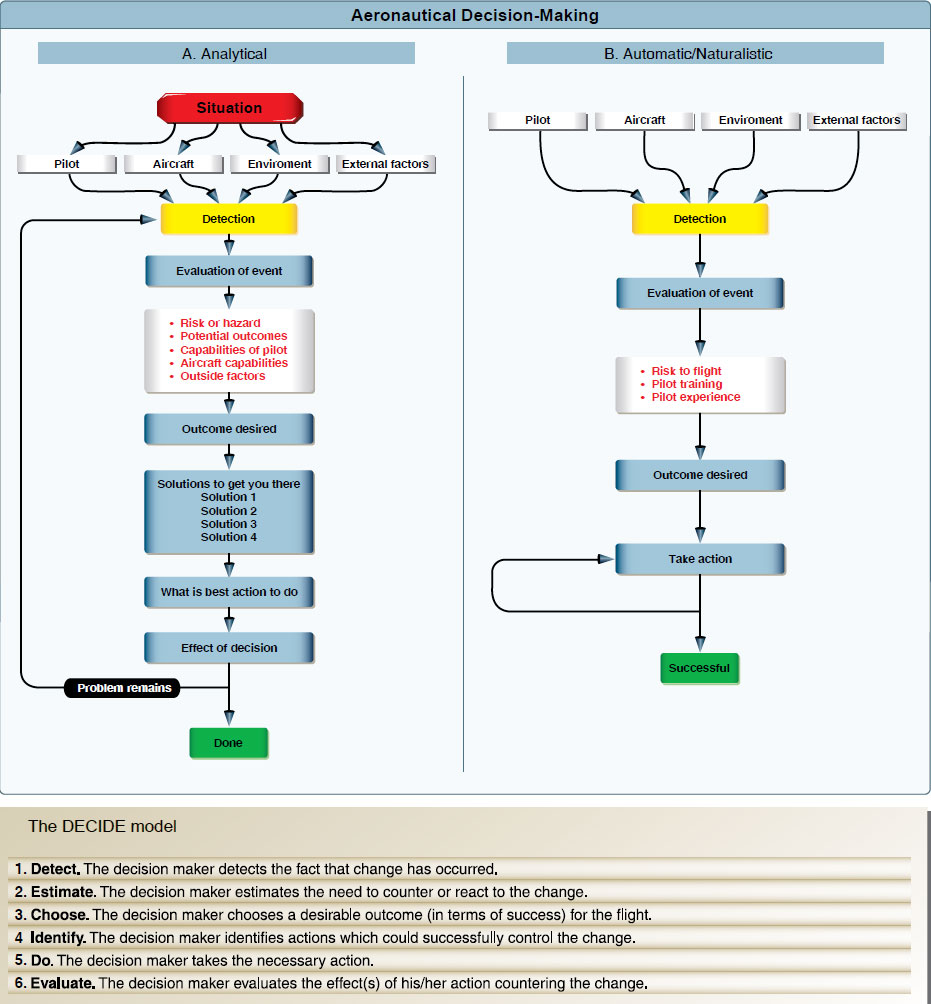

CRM became the umbrella term for a way of looking at aviation safety; ADM is a tool and a teachable framework for integrating safety into every step of the operational process. When CRM and ADM were acknowledged as clear contributors to safety in 1987, the Federal Aviation Administration (FAA) produced a wealth of related training material. Today’s ADM teaches self-awareness through prudent decision-making steps: identifying hazardous personal attitudes, learning how to modify behavior, developing risk assessment skills, using resources, and evaluating the effectiveness of one’s own ADM. (2) In short, ADM reduces risk by examining how personal attitudes affect decision making.

ADM goes a step further and introduces ways to make safe decisions. The FAA’s “DECIDE” model directs operators to Detect a change or hazard, Estimate the need to react to it, Choose a desirable outcome, Identify actions to control the change, Do the required action, and Evaluate the effects. Decision making also has a preflight component: some decisions are made before leaving the ground, while others are made in flight as circumstances change. For the preflight stage, the FAA developed the “PAVE” tool to guide pilots through a pre-assessment of risks associated with the Pilot-in-command, the Aircraft, the enVironment, and External pressures. (2)
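As a rough illustration, the DECIDE steps and the PAVE categories can be written down as data that a checklist aid might walk through. This is a minimal Python sketch under stated assumptions, not an FAA product: the `Decide` enum, the `PAVE` tuple, and the `unreviewed_pave_items` helper are hypothetical names, and the helper simply flags any PAVE category that has no preflight note yet.

```python
from enum import Enum


class Decide(Enum):
    """The six DECIDE steps, in the order the model lists them."""
    DETECT = "Detect a change or hazard"
    ESTIMATE = "Estimate the need to react to it"
    CHOOSE = "Choose a desirable outcome"
    IDENTIFY = "Identify actions to control the change"
    DO = "Do the required action"
    EVALUATE = "Evaluate the effects"


# The four PAVE categories reviewed before leaving the ground.
PAVE = ("Pilot-in-command", "Aircraft", "enVironment", "External pressures")


def unreviewed_pave_items(notes: dict[str, str]) -> list[str]:
    """Hypothetical helper: list PAVE categories with no (non-blank) preflight note."""
    return [item for item in PAVE if not notes.get(item, "").strip()]


if __name__ == "__main__":
    # Enum members iterate in definition order, so this prints the steps in sequence.
    for step in Decide:
        print(f"{step.name.title()}: {step.value}")
    notes = {"Pilot-in-command": "rested, current", "Aircraft": "inspection complete"}
    print(unreviewed_pave_items(notes))
```

Treating PAVE as a fixed tuple keeps the categories in a stable order, so a preflight review can report exactly which ones are still open.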

For as long as aeronautical decision making has been studied, external pressures have been considered a major influence on the process. External pressures range from the desire to impress others to “get-there-itis” or not wanting to disappoint passengers. (2)

Emergency medical service pilots face extreme external pressures and have been shown to make decisions based on the perceived pressure of their patient’s well-being. (2) These intangible judgments often overlook tangible factors like weather, limitations, or fatigue, and the cost has been numerous deadly mishaps.

Primary Concepts

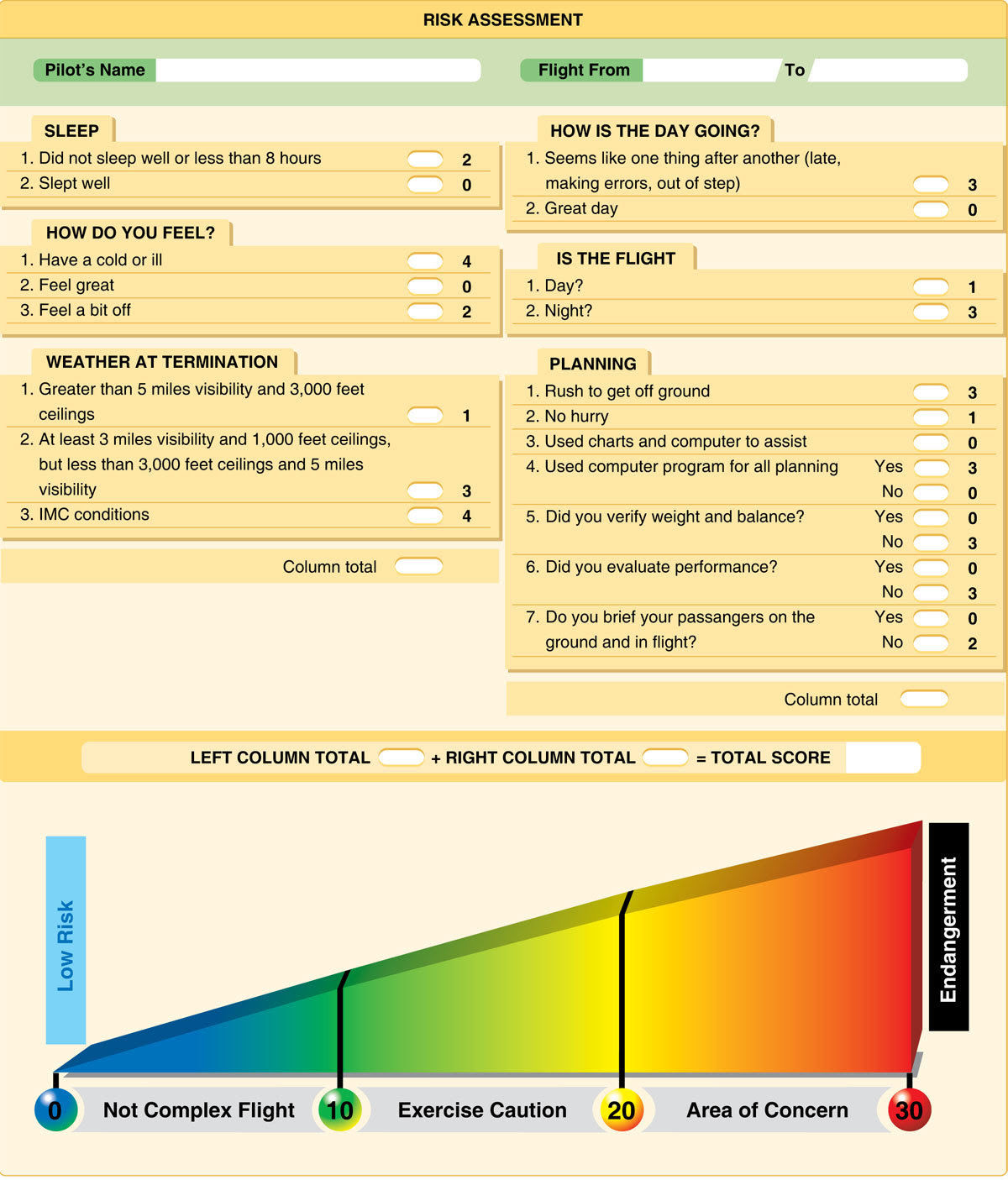

Lastly, FAA risk management includes several key concepts like situational awareness, hazard and risk identification, and rating the likelihood and severity of an accident. To simplify the process of identifying and rating hazards and risks, the FAA has created numerical assessment tools for pilots. (2)
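To show what rating likelihood and severity can look like in practice, here is a minimal Python sketch of a likelihood-by-severity rating. The four-level scales, the 1 to 4 weights, the multiplication, and the band cut-offs are all assumptions chosen for illustration; the FAA’s own assessment tools define their own categories and thresholds, and those should be used in their place.

```python
# Assumed ordinal scales (illustrative only, not the FAA's published weights).
LIKELIHOOD = {"improbable": 1, "remote": 2, "occasional": 3, "probable": 4}
SEVERITY = {"negligible": 1, "marginal": 2, "critical": 3, "catastrophic": 4}


def rate_hazard(likelihood: str, severity: str) -> tuple[int, str]:
    """Combine likelihood and severity into a score and an assumed risk band."""
    score = LIKELIHOOD[likelihood] * SEVERITY[severity]
    # Assumed cut-offs on the 1-16 product scale.
    if score >= 12:
        band = "high"
    elif score >= 6:
        band = "serious"
    elif score >= 3:
        band = "medium"
    else:
        band = "low"
    return score, band


if __name__ == "__main__":
    print(rate_hazard("remote", "critical"))
    print(rate_hazard("probable", "catastrophic"))
```

Multiplying the two ratings is one common way to combine them; a lookup table keyed on both ratings would work just as well and would let each cell be set independently.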

Automatic Decision Making

Aviation CRM came to treat decision making under pressure as a critical competency for emergency and higher-risk operations, because researchers found that people make decisions differently when pressed for time or placed in an emergency. Furthermore, research has shown that rigid analytical models applied under these circumstances may not lead to survival. (2)

Automatic, or naturalistic, decision making describes the style of analysis and action developed by Gary Klein, a noted research psychologist in this field. Klein found that “models of decision-making could not describe decision making under uncertainty and fast dynamic conditions.” (2)

In automatic decision making, experts first determine “whether the situation strikes them as familiar. Rather than comparing the pros and cons of different approaches, they quickly imagine how one or a few possible courses of action in such situations will play out. Experts take the first option they can find.” Though their choice may not be the “best of all possible choices, it often yields remarkably good results.” (2)

Successful implementers recognize “patterns and consistencies that clarify options in complex situations” and “make provisional sense of a situation, without actually reaching a decision, by launching experience-based actions that in turn trigger creative revisions.” (2)

Automatic decision making is clearly vital for those who conduct inherently risky operations (whether the operations are continuously risky, or present the possibility of sudden unexpected circumstances). Automatic decision making has been taught and integrated into professional aviation as well as Marine Corps and Army officer training. Beyond these fields, the “ability to make automatic decisions holds true for a range of experts from fire fighters to police officers.” (2)

Risk Management in the Coast Guard

Lifeboat sailors have always practiced risk management, though the concepts were formalized only recently. Historians note that early U.S. Life-Saving Service Keepers always evaluated situations before responding and “never willingly sent their men out… knowing they wouldn’t come back, if for no other reason than they went out with their men.” (4) Still, the Service has changed its risk posture considerably. Until the 1930s, policy required Keepers to physically attempt a rescue method (surfboat, breeches buoy) before ruling it out. By contrast, today’s crews, Surfmen, and unit commands are much more risk-averse. (3)(4)

To anyone familiar with the Coast Guard’s formal ORM initiative, the FAA’s system looks strikingly familiar. The genealogy is rather straightforward: the FAA paved the way by investigating human factors and risk management in the 1970s and the Coast Guard began to catch up in the 1990s. (3)(5)(6)(7)

The Coast Guard’s 1999 Operational Risk Management Manual describes the service’s history with ORM: between 1991 and 1993, four major maritime mishaps (including the loss of the F/V SEA KING off Cape Disappointment, Washington in January 1991) led the NTSB to call for Coast Guard risk assessment training. (7)

Following the NTSB’s Recommendation

Given that the NTSB deals with all types of transportation mishaps, it is no surprise that it pointed the Coast Guard toward a successful aviation program. (3) In 1996 the Coast Guard held its own multidisciplinary workshop (much like NASA’s in 1979), which brought aviators, boat operators, Auxiliarists, scientists, and trainers to the table to discuss risk management. Each party “shared the same concern for developing a common risk management process” for the Coast Guard to apply universally. (7)

Early on, the Coast Guard adopted the name Team Coordination Training (TCT) for its initiatives to change the way it related to risk and worked together as teams. (3)(5) After initial implementation in 1992, TCT began catalyzing steady declines in mishap rates. Per 100,000 operating hours, boat forces saw a 40 percent decrease in mishap rates in 1994, a 66 percent decrease in 1996, and a 71 percent decrease in 1998 (all compared to the 1987-1992 average). In 1995, exportable team training was created and curriculum packages were developed. (6)(7)
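The TCT figures above are normalized rates compared against a baseline average. A short Python sketch of that arithmetic follows; the function names are hypothetical, and every number in the example is invented for illustration and is not Coast Guard mishap data.

```python
from statistics import mean


def rate_per_100k(mishaps: int, operating_hours: float) -> float:
    """Mishaps normalized to 100,000 operating hours."""
    if operating_hours <= 0:
        raise ValueError("operating_hours must be positive")
    return mishaps * 100_000 / operating_hours


def percent_decrease(baseline_rate: float, current_rate: float) -> float:
    """Percent reduction of current_rate relative to baseline_rate."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    return 100 * (baseline_rate - current_rate) / baseline_rate


if __name__ == "__main__":
    # Hypothetical yearly rates for a baseline period (not real data).
    baseline = mean([5.0, 4.5, 5.5])
    # Hypothetical later year: 3 mishaps in 200,000 operating hours.
    later = rate_per_100k(3, 200_000)
    print(baseline, later, percent_decrease(baseline, later))
```

Normalizing by operating hours matters because a unit that operates more will log more mishaps even if it is no less safe; comparing rates, not raw counts, is what makes the yearly percentages comparable.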

In 1999, the Coast Guard wrote that its ORM program “expands a flourishing acceptance of these principles at the operational unit level… and clearly reinforces the Commandant’s direction for improved decision-making for superior performance.” (4) For the first time in the service’s history, direct emphasis was placed on “team” decisions and on the importance of individual members’ assertiveness when decisions were being made poorly. This material was eventually incorporated into resident programs at training centers like the National Motor Lifeboat School. (3)(4)

While the Coast Guard worked dutifully to align itself with the leading edge of risk management protocol, the application and effectiveness of ORM were marked by deadly accidents such as those at Quillayute River in 1997, Station Niagara in 2001, and Station San Diego in 2008. (3)

Basics of Coast Guard ORM

The Coast Guard has long held that risk is not always “bad,” as traditional approaches assert. As an emergency response organization, it must maintain this posture in order to accomplish missions in which calculated risks achieve success in marginal situations. In 1999, the Coast Guard explained that “ORM provides the framework to minimize risk, show concern for colleagues, and maximize the unit’s mission capabilities, helping to achieve the Commandant’s direction, ‘Perform all operations flawlessly.’” (7) The first conception of TCT revolved around seven steps and seven critical skills: (7)(8)

Steps:

-Identify mission

-Identify hazards

-Assess risks

-Identify options

-Evaluate risk versus gain

-Execute decision

-Monitor situation

Skills:

-Leadership

-Mission analysis

-Adaptability and flexibility

-Situational awareness

-Decision making

-Communications

-Assertiveness

Furthermore, the Coast Guard enacted four rules for risk management and published them in the widely referenced Boat Crew Seamanship Manual: (8)

-Integrate risk management into planning and operations

-Accept no unnecessary risks

-Make decisions at the appropriate level

-Accept risks if benefits outweigh costs

Tools

To ground these concepts of TCT, the Coast Guard published a variety of tools. To identify hazards, there was the “PEACE” model (Planning, Event complexity, Asset selection, Communications and supervision, and Environment); to identify mitigation options, there was the “STAAR” model (Spread out, Transfer, Avoid, Accept, Reduce). Both of these models were wrapped up into one package, the “GAR” (General Assessment of Risk) model. Crews used the GAR framework as a discussion guide to account for hazards and mitigation strategies before leaving for a mission. (7)(8)

GAR covered each of the parts of the PEACE model and assigned each category a risk score from 0 to 10, with 0 as slight and 10 as very high. Once a total score was computed, it was placed on the GAR scale: 0-23 (green) meant “no risk,” 24-44 (amber) meant “caution,” and 45-60 (red) meant “high risk.” (7)

The scoring process was meant to give crews an opportunity to identify ways to lower scores and make the mission safer. If the risk remained too high, the crew faced a go/no-go decision. Before leaving the dock, boat crews radioed their GAR scores to the station. (7)
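The arithmetic behind the GAR scale can be sketched in a few lines of Python. The text gives a 0 to 10 range per item and a 0 to 60 total; six items at 10 points each is what reaches 60, so this sketch assumes six generic, unnamed items rather than naming them. The function name `gar_score` and the example scores are hypothetical; only the band cut-offs and labels are taken from the text.

```python
# Upper bound of each band, with its color and label, as described in the text.
GAR_BANDS = (
    (23, "green", "no risk"),
    (44, "amber", "caution"),
    (60, "red", "high risk"),
)
NUM_ITEMS = 6  # assumption: 6 items x 10 points = 60, the top of the GAR scale


def gar_score(scores: list[int]) -> tuple[int, str, str]:
    """Total the item scores and place the total in a GAR band."""
    if len(scores) != NUM_ITEMS:
        raise ValueError(f"expected {NUM_ITEMS} item scores, got {len(scores)}")
    if any(not 0 <= s <= 10 for s in scores):
        raise ValueError("each item score must be between 0 and 10")
    total = sum(scores)
    for upper, color, label in GAR_BANDS:
        if total <= upper:
            return total, color, label
    raise AssertionError("unreachable: six scores of at most 10 cannot exceed 60")


if __name__ == "__main__":
    before = [5, 5, 5, 5, 5, 5]  # hypothetical brief: total 30, amber
    after = [5, 3, 5, 4, 3, 3]   # same brief after discussing mitigations: total 23, green
    print(gar_score(before), gar_score(after))
```

The example also makes the weakness the Coast Guard later identified easy to see: trimming a point here and there moves the total into green without recording whether the underlying hazard actually changed.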

Coast Guard Risk Management Today

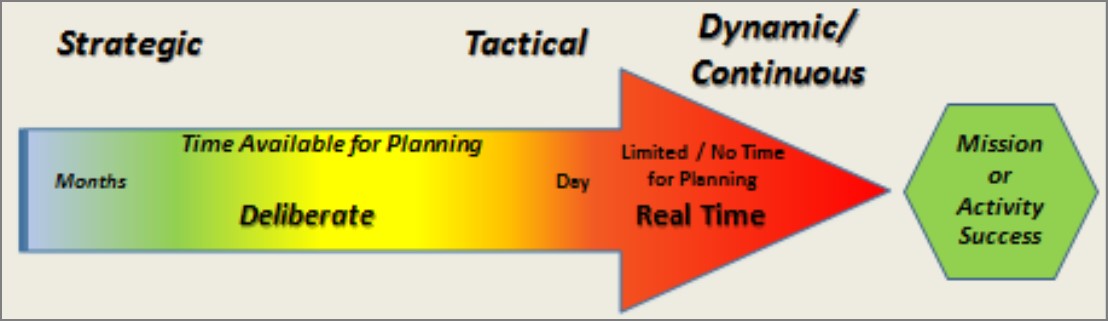

In 2018, the Coast Guard dropped the “Operational” part of ORM and kept simply “RM” instead. This change reinforced the expectation that risk management be incorporated into all activities, including non-operational ones. (7) The switch to RM also included a mandate for unit-level risk management on several different time scales: dynamic/continuous (during a real-time operation), tactical (during daily briefs), and strategic (during annual reviews of likely risks). (5)

With this rollout of changes, the Coast Guard tailored risk management to specific communities, including separate aviation, cutter, and boat forces risk models, as well as newer concepts like “Bridge Resource Management” (for the bridge teams of larger ships) and “Maintenance Resource Management.” (5)(9)

The 2018 update also condensed the former seven-step process into five steps:

-Identifying hazards

-Assessing hazards

-Developing controls and making decisions

-Implementing controls

-Supervising and evaluating controls

The change boat crews felt most, however, was the new “GAR 2.0.” GAR 2.0 still combines the PEACE and STAAR models and serves as a discussion tool, but it drops the numerical scoring. Instead, GAR 2.0 results are reported qualitatively, for example as “low risk, medium gain.” (5)(10)

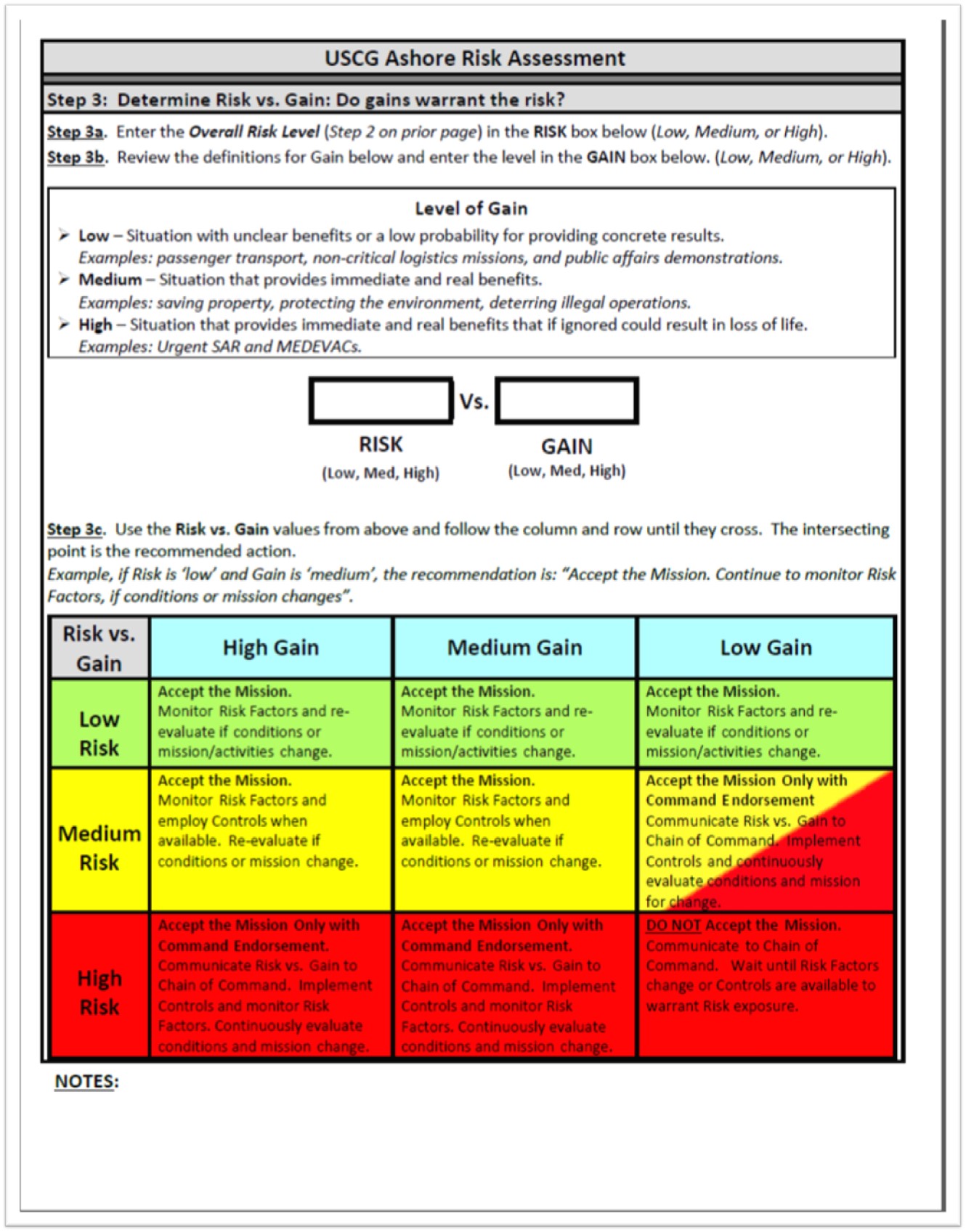

Over the years after GAR was first established, program managers noticed that the numerical scores received emphasis while the risks themselves were deemphasized (for example, boat crews often tried to find ways to “mitigate” a score down into the green range). (5) After “extensive testing and validation,” the Coast Guard released GAR 2.0 and promised a model with greater accuracy and effectiveness. (10) GAR 2.0 acknowledges that some activities are simply higher risk and that mitigation may be limited. It therefore introduces clearer ways to weigh risk versus gain and to ensure that the decision to conduct the mission is made at the proper level. (5)(8) If risk is very high, a decision matrix directs crews to discontinue the mission or seek command approval.
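GAR 2.0’s qualitative output can be sketched in the same spirit. Only one rule below comes from the text: a very high risk directs the crew to discontinue the mission or seek command approval. The four-level scale, the `gar2_decision` and `describe` names, and the two fallback rules are placeholder assumptions for illustration, not the Coast Guard’s actual decision matrix.

```python
from enum import IntEnum


class Level(IntEnum):
    """Assumed four-level scale used for both risk and gain (illustrative only)."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    VERY_HIGH = 4


def gar2_decision(risk: Level, gain: Level) -> str:
    """Hypothetical risk-versus-gain lookup."""
    if risk is Level.VERY_HIGH:
        # Stated in the text: very high risk means stop or elevate.
        return "discontinue the mission or seek command approval"
    if risk > gain:
        # Placeholder assumption: risk outweighs gain, so elevate the decision.
        return "elevate the decision before proceeding"
    # Placeholder assumption: gain justifies the risk with controls in place.
    return "proceed with controls in place"


def _label(level: Level) -> str:
    return level.name.lower().replace("_", " ")


def describe(risk: Level, gain: Level) -> str:
    """Render a result in the 'low risk, medium gain' style."""
    return f"{_label(risk)} risk, {_label(gain)} gain"


if __name__ == "__main__":
    risk, gain = Level.LOW, Level.MEDIUM
    print(describe(risk, gain), "->", gar2_decision(risk, gain))
```

Keeping risk and gain as separate ordered levels, rather than collapsing them into one number, mirrors the shift away from a single total that crews could “mitigate” into the green.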

Because of Quillayute River…

The phrase “Because of Quillayute River…” remains in the vernacular within the Coast Guard’s small boat community. While changes did stem from the 12 February 1997 tragedy, these events have been viewed both as the result of inadequate organizational RM initiatives and as the impetus for later improvements. (3)(4)

Case Study

When the CG 44363 administrative investigation was completed, it directed several RM-related changes. First, it mandated that “all small boat crew members attend Team Coordination Training,” and that the specifics of the CG 44363 accident be used as a servicewide case study. The Chief of Staff’s final decision letter also ordered that the Boat Crew Seamanship Manual be updated with a requirement for crews to conduct thorough pre-underway briefs. (3)(11)

The Coast Guard sought to teach some of the lessons of the CG 44363 mishap through a modified 1998 case study called “On Rocky Ground.” Using a pseudonym for the station and anonymous crewmembers, the case study recounted the Quillayute River mishap almost verbatim. While it followed the synopsis closely, it omitted several pertinent details and added other facts that were not published in the official administrative investigation. Initially, some station crews were displeased with the way the case study was taught by visiting instructors, some of whom were civilian volunteers from the Coast Guard Auxiliary. To some, the presentation focused on coxswain errors and seemed to say, “the surf community messed up in 1997.” (3)(4)(12)

Urgency

Official analysis of the mishap also emphasized the judgment that BM2 Bosley “moralized the urgency of the search and rescue case without adequately weighing the risks.” (3)(11) In essence, Headquarters viewed his decision-making process as being similar to the old Life-Saving Service credo that, “You have to go out, but you don’t have to come back.” (3)(11)(13) Service initiatives to integrate TCT into all levels of operations, from 1997 to the present, have sought to address this specific human factor and change crews’ priorities to an “us, ours, them, theirs” hierarchy (from greatest to least priority: the Coast Guard crew, the Coast Guard asset, the would-be victims, and their property). (3)(5)(7)(10)

The tragedy at Quillayute River continues to circulate among boat forces personnel, but it has lost its freshness; it is now an “old,” watered-down story. Given the technological improvements in the intervening period, some station crews relate to the mishap as if “QR happened in a different era.” While over twenty years have gone by and equipment has changed, the context and events of 12 February 1997 are still dramatically relevant: crews will probably always feel the same urgency when the “SAR” alarm sounds, and humans will always be capable of mistakes. (3)(4)

Since the 1970s, credible research has shown that the accelerating complexity and the risks of modern operations continue to place great demands on crews. It is now understood that proven, deliberate skills and tools can be taught and standardized: risk management can be “downloaded” into individuals and teams. As such, the Coast Guard’s efforts to understand its operational risks, to evolve its culture, and to develop and revise risk assessment tools continue to be of vital importance. (3)(5)

References

(1) “The History of CRM (Crew Resource Management).” 2012, www.youtube.com/watch?v=Tpx3e1kCMCA&list=WL&index=123&t=0s

(2) FAA Risk Management Handbook. ser. FAA-H-8083-2, 2009.

(3) Noble, Dennis L. The Rescue of the Gale Runner. University Press of Florida, 2002.

(4) Interviews with active duty and retired members, grades E-5 to O-6: Surfmen, investigation members, Officers-in-Charge, and Commanding Officers.

(5) Risk Management Manual. ser. CIM3500.3A, 2018.

(6) Team Coordination Training. ser. CI 1541.1, 1998.

(7) Operational Risk Management Manual. ser. CIM3500.3, 1999.

(8) U.S. Coast Guard Boat Crew Seamanship Manual. ser. CIM16114.5C, 2003.

(9) Patraiko, David. “Bridge Resource Management: Working as a Cohesive Team.” The Navigator, Oct. 2014.

(10) U.S. Coast Guard ACN 030/18: “MAR 2018 Promulgation of Risk Management Commandant Instruction”

(11) CDR Hasselbalch, James M. Investigation into the Capsizing and Subsequent Loss of MLB 44363 and the Death of Three Coast Guard Members That Occurred at Coast Guard Station Quillayute River on 12 FEB 1997. March, 1997 (including reviews by RADM J. David Spade and ADM Robert E. Kramek).

(12) Team Coordination Training Exercises & Case Studies. ser. G65302, 1998.

(13) Noble, Dennis L. Lifeboat Sailors. Potomac Books, 2001.

cover: U.S. Coast Guard photo